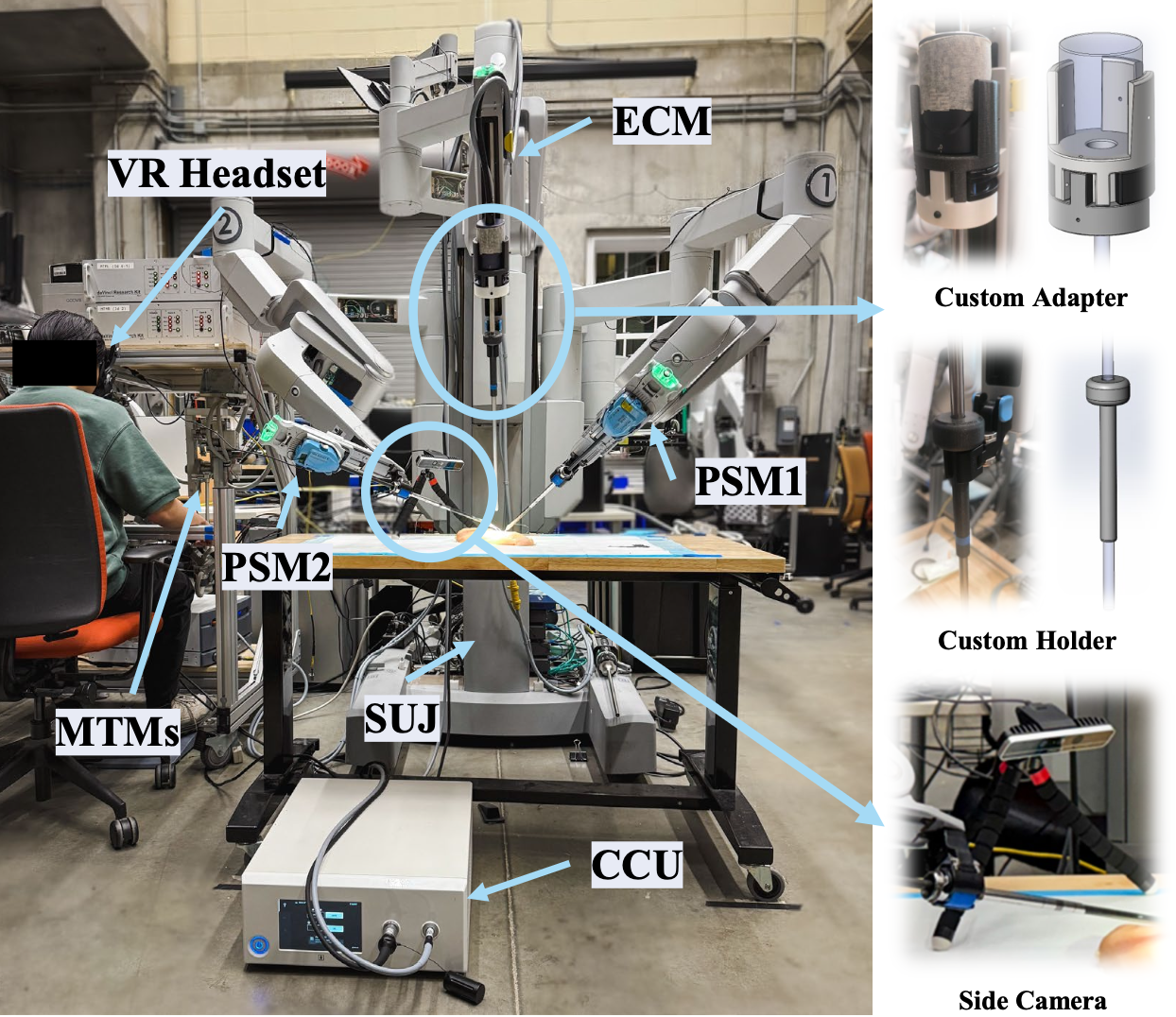

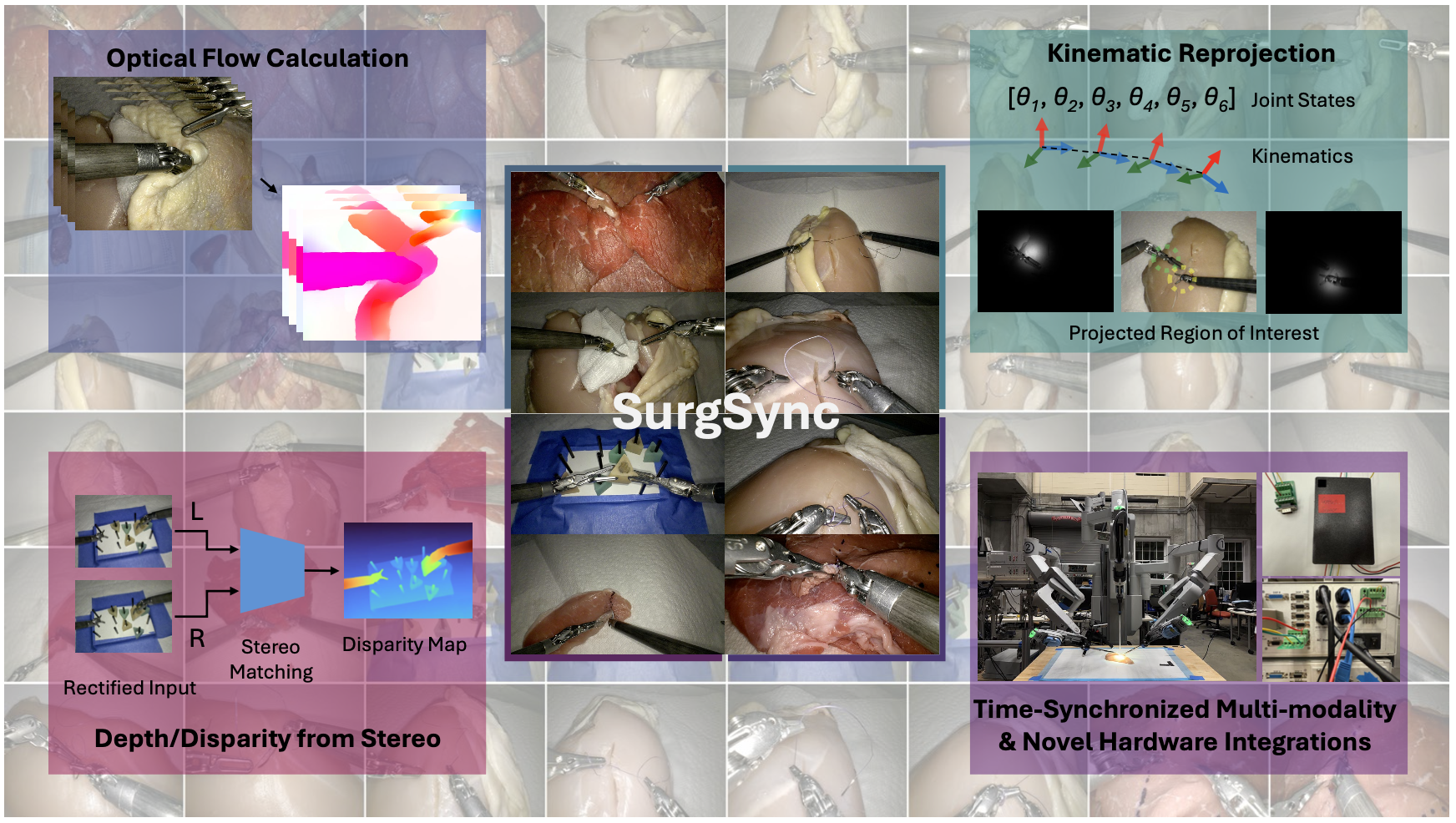

SurgSync: Time-Synchronized Multi-modal Data Collection Framework for Surgical Robotics

Motivation

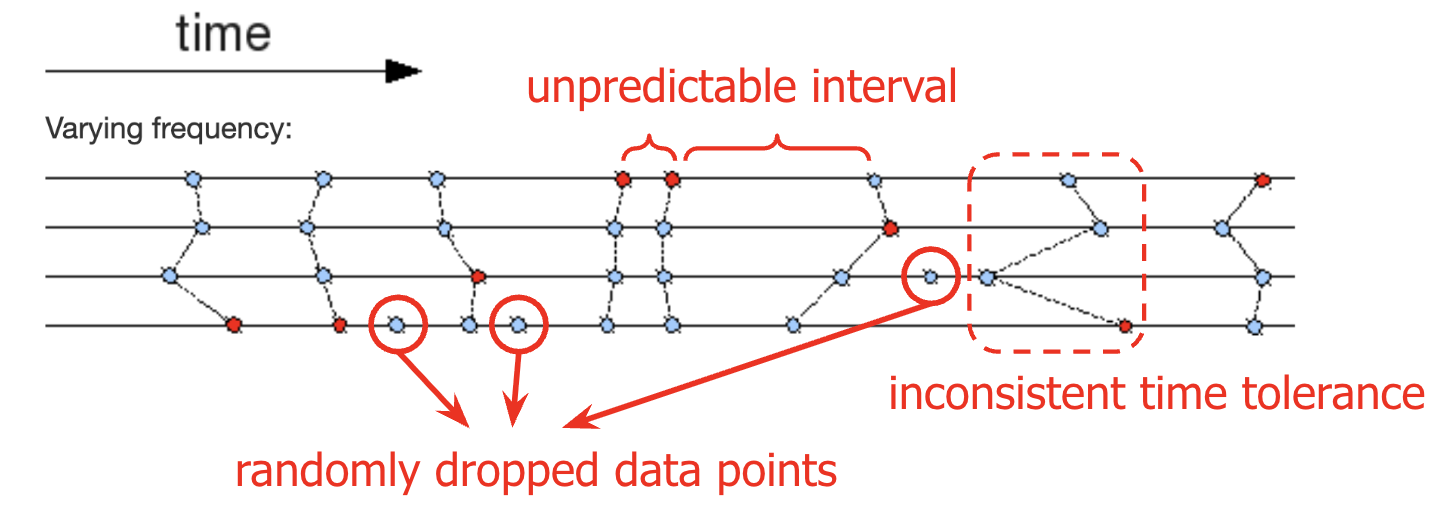

A key challenge faced by all teams conducting surgical robotics research is the collection of high-quality, synchronized multi-modal data. Traditional ROS synchronization utilities such as message_filters::sync_policies::ApproximateTime have two main issues:

- Do not enforce strict time tolerance between data streams (matching is conducted on a best-effort basis)

- Drop large quantities of output when many topics are recorded, for example when the 8 ROS topics mentioned above are subscribed simultaneously

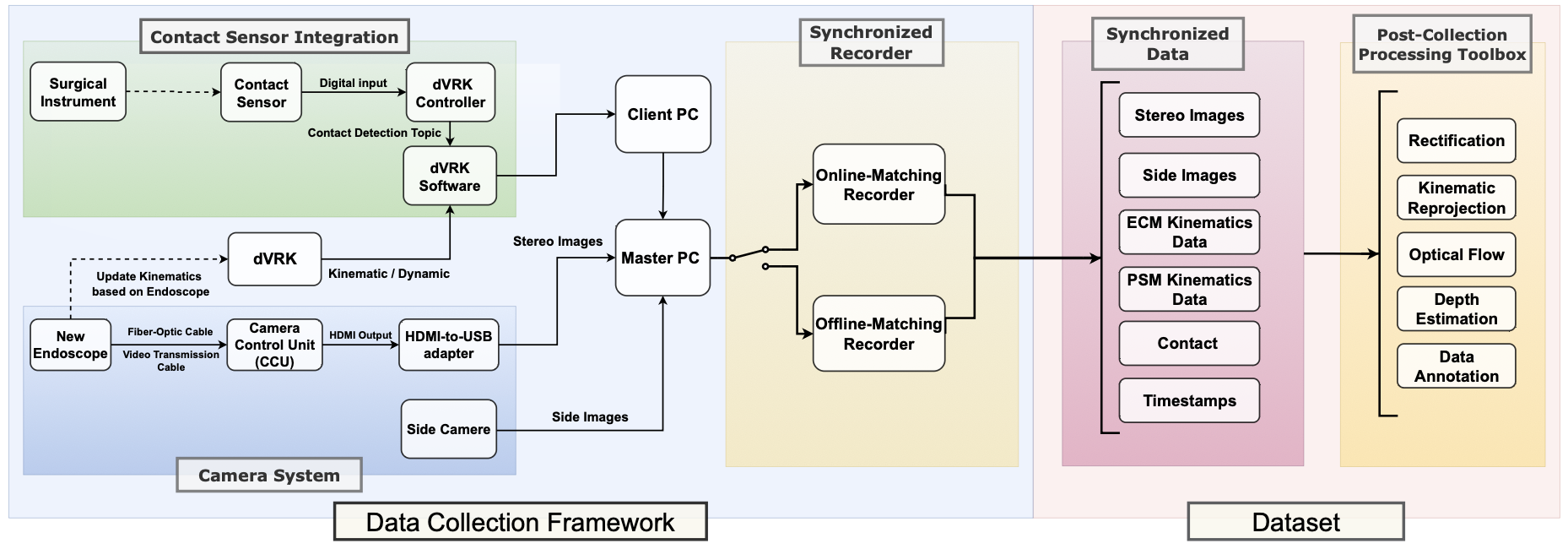

Summary of Our Data Pipeline

Synchronized Data Recorders

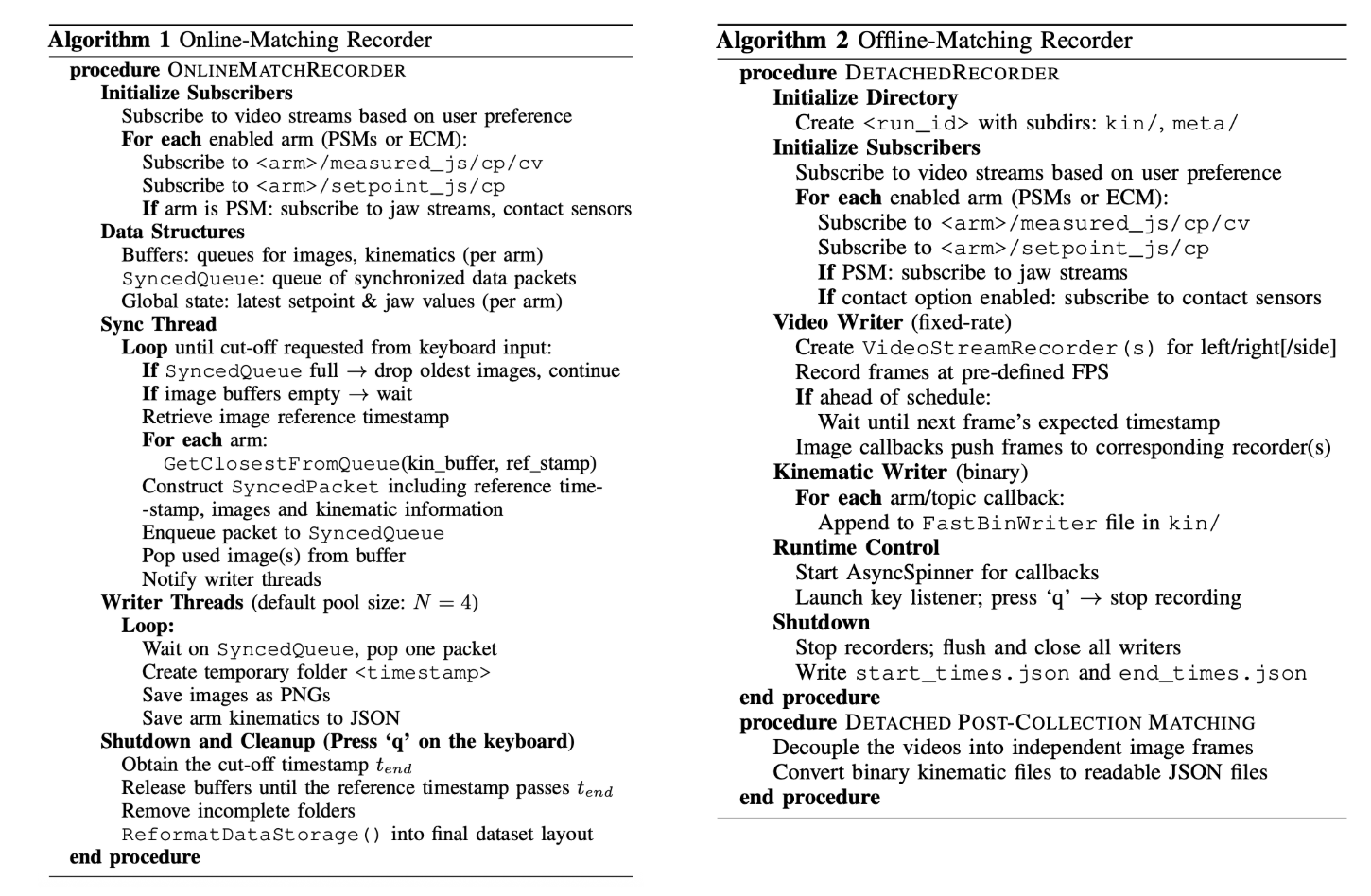

We designed and implemented two novel synchronized recorders in C++ compatible with both ROS1 and ROS2, which aim to record temporally aligned data streams across multiple sensing modalities:

- Online-Matching Recorder: The design enforces strict time synchronization using multi-threading: only samples that fall within a user-defined time tolerance are admitted. This yields slightly uneven inter-sample intervals (irregular ∆t), but it retains the natural continuity of smooth teleoperation segments and avoids label/feature drift. This design can be used for real-time scenarios.

- Offline-Matching Recorder: Our offline-matching approach decouples recording from time alignment to maximize the efficiency of the recording system. It is the more suitable choice for offline learning workflows, where deterministic post-processing is preferable to on-the-fly data matching.
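The strict-tolerance rule shared by both recorders can be illustrated with a minimal Python sketch. This is not the recorders' C++ implementation: the function names `nearest_index` and `match_streams`, the choice of a single reference stream, and the nearest-neighbour search are all assumptions made for illustration. The sketch takes sorted timestamp lists (in seconds) and keeps a sample set only if every stream has a sample within the tolerance of the reference timestamp.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Index of the entry in the sorted list `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def match_streams(reference, others, tolerance):
    """Return index tuples (ref_idx, idx_in_stream_1, ...) for every reference
    timestamp that has a sample within `tolerance` seconds in all other streams."""
    matches = []
    for ref_idx, t in enumerate(reference):
        candidate = [ref_idx]
        for stream in others:
            if not stream:
                break  # an empty stream can never be matched
            j = nearest_index(stream, t)
            if abs(stream[j] - t) > tolerance:
                break  # strict tolerance: reject the whole sample set
            candidate.append(j)
        else:
            # Reached only if no stream violated the tolerance.
            matches.append(tuple(candidate))
    return matches


# Hypothetical timestamps: one reference stream and two other streams.
reference = [0.00, 0.10, 0.20, 0.30]
others = [
    [0.001, 0.098, 0.25, 0.301],
    [0.002, 0.104, 0.199, 0.296],
]
matched = match_streams(reference, others, tolerance=0.005)
```

In this example the third reference sample is rejected because the first stream has no sample within 5 ms of it, so the admitted samples are unevenly spaced in time, which is the irregular ∆t described above.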

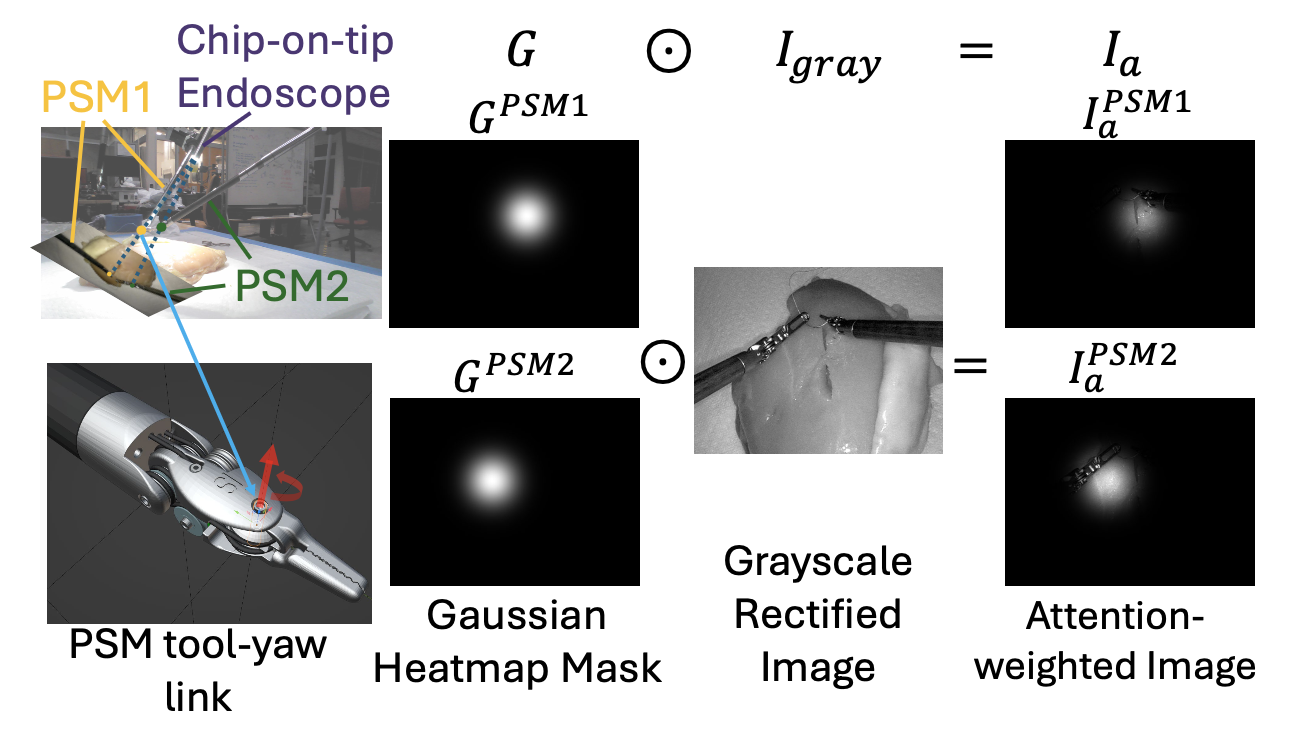

Kinematic Projection

We propose a novel approach that uses a Gaussian heatmap to project the 3D tool-tip position obtained from kinematics onto 2D gray-scale endoscopic images. The projection relies on a hand-eye calibration to correct for the well-known inaccuracy of the dVRK kinematics.
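The idea can be sketched in a few lines of Python. The sketch assumes a simple pinhole camera model and a tool-tip position that has already been transformed into the camera frame by the hand-eye calibration. The function names, the intrinsics `fx`, `fy`, `cx`, `cy`, the image size, and the spread `sigma` are hypothetical values chosen for illustration, not parameters from this work.

```python
import math


def project_point(p_cam, fx, fy, cx, cy):
    """Project a 3D point (camera frame, metres) to pixel coordinates
    using a pinhole model without lens distortion."""
    x, y, z = p_cam
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    return fx * x / z + cx, fy * y / z + cy


def gaussian_heatmap(width, height, center, sigma):
    """Return a height x width grid with values in (0, 1], peaking at `center`."""
    u0, v0 = center
    return [
        [
            math.exp(-((u - u0) ** 2 + (v - v0) ** 2) / (2.0 * sigma ** 2))
            for u in range(width)
        ]
        for v in range(height)
    ]


# Hypothetical tool tip 10 cm in front of the camera, on the optical axis.
tip_px = project_point((0.0, 0.0, 0.1), fx=100.0, fy=100.0, cx=32.0, cy=24.0)
heat = gaussian_heatmap(width=64, height=48, center=tip_px, sigma=3.0)
```

The resulting grid can be scaled to 0-255 and stored as a gray-scale image with the same resolution as the endoscopic frame. A larger `sigma` spreads the peak and makes the heatmap more tolerant of residual calibration error.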

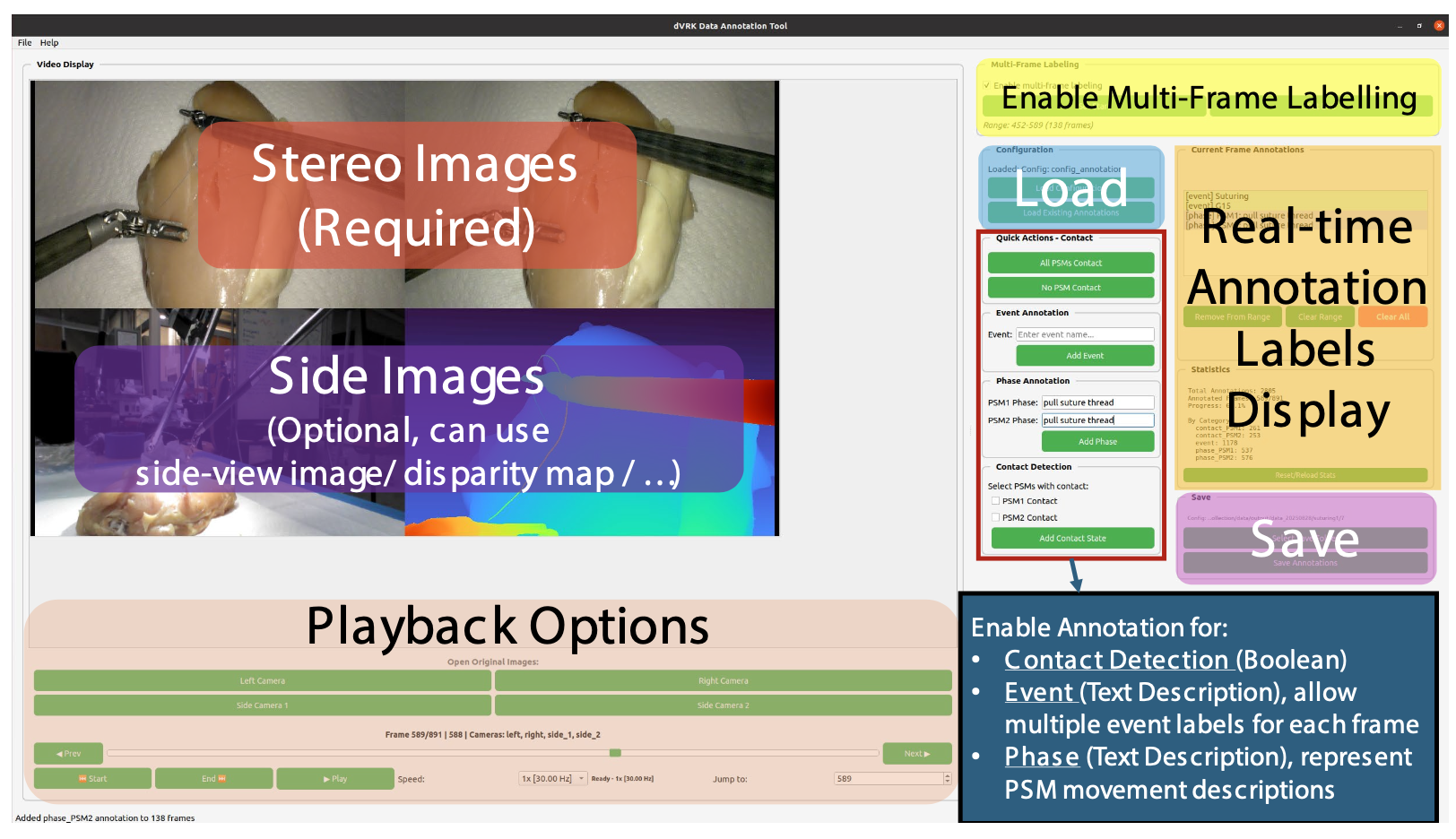

Data Annotation GUI / Depth Viewer

We developed a custom data annotator with a graphical user interface (GUI) built with PyQt, shown in the figure below. It lets users label contact detection and event/phase descriptions during playback of consecutive frames.